TL;DR:

- AI adoption is one of the biggest unsolved problems on a CISO’s plate, and no security tool completely solves it yet.

- Fable was built from a first principles view of human risk, so we didn’t have to build a new product to solve it.

- Three use cases customers are running today: enabling new AI tools, detecting shadow AI in the moment, and grounding every briefing in your AI policy.

- Reserve your spot in the live roundtable with Jacob Berry (Field CISO, Fable), John Yeoh (CSO, Cloud Security Alliance), and Steve Tran (VP of Global Security, Iyuno).

AI adoption is the newest unsolved problem on the CISO’s plate

Last week, we made the case that AI adoption is a security imperative, not a security problem. The CISOs who win the next decade are the ones turning their teams from a department of no into a department of how.

But getting there is hard. Secure AI adoption is one of the newest and biggest unsolved problems on a CISO’s plate today.

For Fable customers, the exciting part is that we’re already helping them solve it, because Fable didn’t have to build a new product to drive secure AI adoption.

Fable’s platform was built from the ground up to understand human risk and change human behavior. That mandate stays the same whether the behavior in question is clicking a phishing link, installing a sketchy browser extension, or pasting a customer contract into the ChatGPT free tier. AI adoption isn’t a use case we just happened to be able to solve. It’s exactly the kind of problem the platform was designed for.

Why Fable’s platform was already pointed at this problem

At Fable, we’ve always thought about building a behavior platform, not a feature set. The platform has three properties that line up almost exactly with what safe AI adoption requires:

- Ingests thousands of signals from the tools you’ve already deployed (browser, CASB, DLP, identity, endpoint, MDM). No new integration needed to see shadow AI.

- Correlates every signal to the specific employee whose behavior needs to shift, not just to a SOC queue or a dashboard.

- Deploys customized, in-the-moment content automatically. A two-minute video, grounded in your policy, in front of the right employee, with no manual lift from the security team.

It’s the same platform our customers already love, and the same reason their employees actually watch the content and take action. Pointing it at AI adoption wasn’t a pivot. It was the next natural use case for the platform we already built.

Below, the three use cases we’re going deep on today.

Use case 1: Enabling employees on new AI tools

Every few weeks, customers are rolling out something new. A Copilot license, a vertical legal copilot, an internal RAG agent, or a workflow that lives inside their CRM.

The launch happens. Comms go out in an all-hands or a Slack thread that ages out in 48 hours. Adoption stalls. Support tickets pile up. And in the gap between “we launched it” and “everyone is using it,” shadow tools fill in.

This is where Fable comes in. We ship fresh, customizable content for the most common AI tools, pushable to the entire workforce or a specific subset of users in a single click. For the rollouts that don’t map to anything off the shelf, our Composer feature lets you generate a custom briefing, or customize an existing one, to match your specific deployment. Each briefing is automatically customized to your company’s brand and voice.

The result is something a security team can actually say out loud on launch day: Here’s the new tool. Here’s exactly how to use it. Here’s what’s off limits. Same day the tool goes live.

And very soon, you’ll be able to spin those briefings up through our video briefing agent.

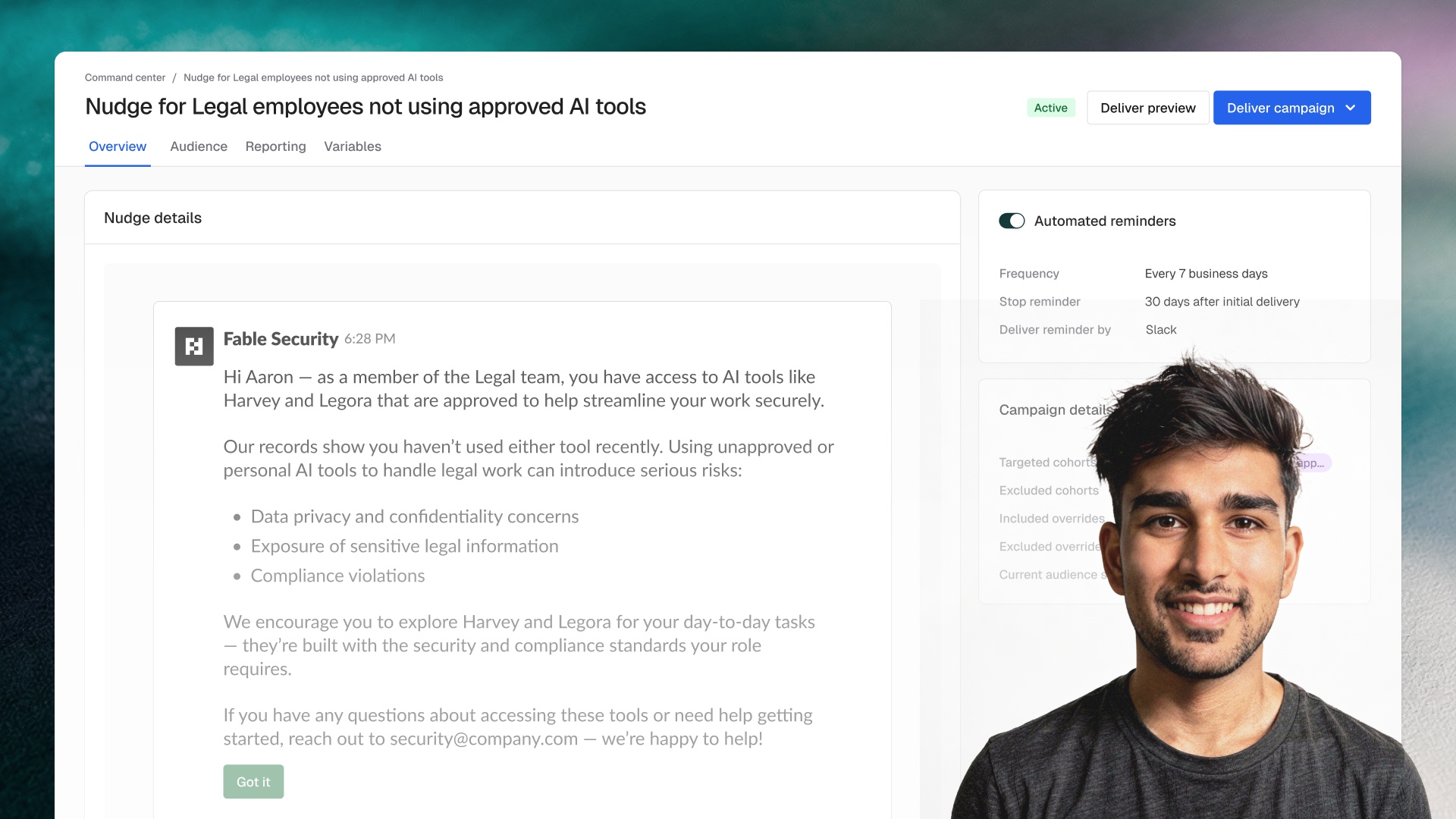

Use case 2: Detecting and correcting shadow AI usage

Shadow AI happens when sanctioned tools don’t fit the job and someone pastes a customer contract into an AI tool’s unsecured free tier. The numbers from last week’s post still capture the scale of it most clearly: the average organization sees 223 generative AI data policy violations every month, and 47% of employees who use AI do so on personal accounts your DLP cannot see.

Fable’s approach to shadow AI works in four steps:

- Detect in near real time using signals from the tools your security team has already deployed. PII headed into unsanctioned LLMs, visits to banned sites, sensitive uploads to personal accounts.

- Resolve the signal to the employee.

- Intervene in the moment. Here’s why this isn’t sanctioned. Here’s the approved tool to use instead. Here’s a 90 second walkthrough.

- Watch the behavior. If it doesn’t change, escalate with a stronger touch, or pull the security team in. The platform already has the escalation paths built in.

Detect, educate, verify. Automatically, at the employee level, every day. Coaching, not URL blocking.
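
For readers who think in code, here is a minimal sketch of how the four steps above could fit together. It is an illustration under stated assumptions, not Fable’s implementation: the `Signal` and `EmployeeState` types, the `SANCTIONED_TOOLS` and `DEVICE_OWNERS` lookups, the action names, and the two-touch escalation threshold are all hypothetical.

```python
from dataclasses import dataclass

# Everything here is hypothetical and for illustration only.
SANCTIONED_TOOLS = {"approved-assistant.example.com"}
DEVICE_OWNERS = {"laptop-17": "dev@example.com"}


@dataclass
class Signal:
    """One event from an already-deployed tool (browser, CASB, DLP, ...)."""
    device_id: str
    destination: str


@dataclass
class EmployeeState:
    email: str
    touches: int = 0  # interventions sent to this employee so far


def is_shadow_ai(signal: Signal) -> bool:
    """Step 1: detect traffic to an AI destination that is not sanctioned."""
    return signal.destination not in SANCTIONED_TOOLS


def resolve_employee(signal: Signal, states: dict) -> EmployeeState:
    """Step 2: resolve the signal to the specific employee behind it."""
    email = DEVICE_OWNERS[signal.device_id]
    return states.setdefault(email, EmployeeState(email))


def next_action(state: EmployeeState, max_touches: int = 2) -> str:
    """Steps 3 and 4: coach first, then escalate if the behavior repeats."""
    state.touches += 1
    if state.touches == 1:
        return "send_briefing"
    if state.touches <= max_touches:
        return "send_stronger_briefing"
    return "notify_security_team"


def handle(signal: Signal, states: dict):
    """Run one signal through the pipeline; None means nothing to do."""
    if not is_shadow_ai(signal):
        return None
    return next_action(resolve_employee(signal, states))
```

The design choice worth noticing is that state is kept per employee rather than per alert, which is what lets a repeat of the same behavior trigger a stronger touch instead of the same message again.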

Use case 3: Educating employees on your AI policies

Most AI policies are multi-page PDFs that exist to satisfy auditors, not to change how a developer works on a Tuesday afternoon. Distributing the PDF to 5,000 people doesn’t work. Neither does an annual training.

Fable’s policy boost takes the PDF and grounds every AI-related briefing in it. New tool rollouts, general AI policy overviews, shadow AI interventions. The script pulls language, examples, and edge cases directly from your policy: your SSO provider, your sanctioned tools, your data handling rules.

The many pages become a one-minute video. The developer it’s meant for finishes it. Employees feel empowered, not blocked.

Measuring sanctioned AI adoption, not training completion

The fourth lever from last week was the one most security leaders still don’t have a clean answer for: measure sanctioned adoption, not training completion.

Because every Fable intervention is correlated to an individual employee and tied back to a behavior signal, the same platform running the three use cases above is also telling you whether they’re working. Which tools your workforce is actually reaching for. Which interventions changed behavior. Which cohorts still need another touchpoint.
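
As a rough sketch of what “measure sanctioned adoption” can mean in practice, the snippet below computes, per cohort, the share of observed AI usage events that went to a sanctioned tool. This is one possible definition chosen for illustration, not a metric taken from the Fable platform; the event shape and the function name are assumptions.

```python
from collections import defaultdict


def sanctioned_adoption_rate(events):
    """Return {cohort: sanctioned events / all AI usage events}.

    `events` is an iterable of (cohort, used_sanctioned_tool) pairs,
    a hypothetical shape for correlated behavior signals.
    """
    totals = defaultdict(int)
    sanctioned = defaultdict(int)
    for cohort, used_sanctioned_tool in events:
        totals[cohort] += 1
        if used_sanctioned_tool:
            sanctioned[cohort] += 1
    return {cohort: sanctioned[cohort] / totals[cohort] for cohort in totals}


events = [
    ("engineering", True),
    ("engineering", False),
    ("engineering", True),
    ("legal", False),
]
rates = sanctioned_adoption_rate(events)
```

In this toy data, two of three engineering events and none of the legal events went to a sanctioned tool, so legal is the cohort that would need another touchpoint.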

Today, roughly 1 in 3 Fable customers is running at least one of these use cases in production. And the feedback is consistent: it feels less like a security tax, and more like an enablement engine.

Built for the next problem, too

If your team is somewhere on the spectrum from “we wrote a policy but no one reads it” to “we have no idea what employees are pasting into LLMs,” this is exactly what we built Fable for.

But the bigger point lives one level up. Fable was built from a first principles view of potentially risky human behavior. That’s why pointing it at AI adoption was a natural next step, not a new product. And it’s why the platform is designed to address the next human risk, or the next business imperative your employees need to operate against, whatever it turns out to be.

When the next big risk lands, will your security stack already be built to absorb it? Or will your vendors be scrambling to ship another point product to chase it?

Register for the live roundtable

Join Jacob Berry (Field CISO, Fable Security), John Yeoh (CSO, Cloud Security Alliance), and Steve Tran (VP of Global Security, Iyuno) for a live conversation on what the department of how actually looks like once you have to operationalize it.