Day 8 of 12 days of riskmas (or, if you prefer, risk-mukah or the non-denominational risk-ivus)

The TL;DR

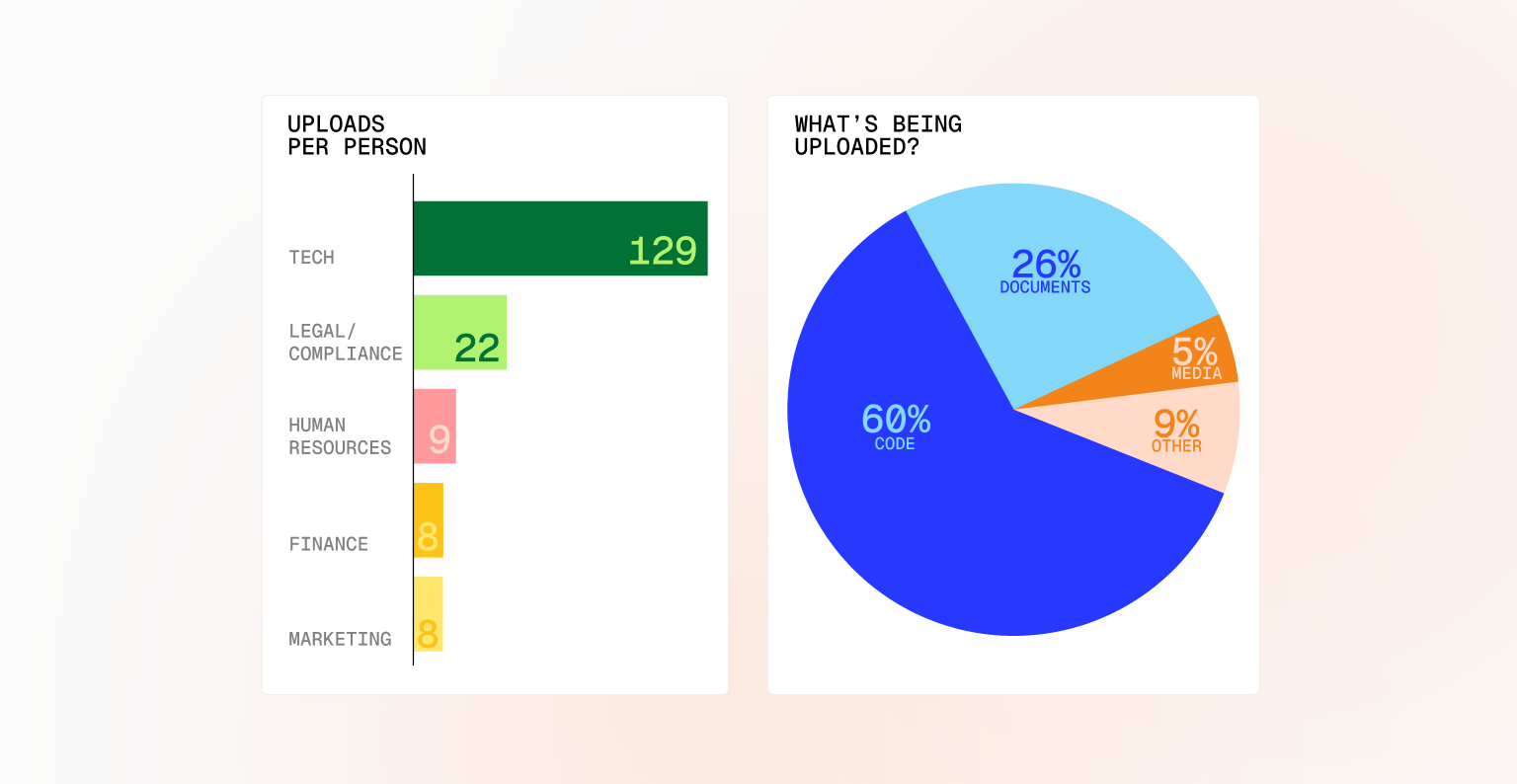

- People are uploading real work into generative AI apps

- In one company, the tech team leads, with legal/compliance a distant second

- Code dominates uploaded content (60%), followed by documents (26%)

Who’s sailing closest to the edge with generative AI? That’s the question security leaders are asking as AI tools slip into everyday workflows. In this slice of our human risk report, we examine which cohorts inside a single organization uploaded the most content to generative AI tools over a six-month period, and what kind of data they shared. The goal wasn’t to point fingers, but to understand behavior at scale—because that’s where risk lives.

This early picture is revealing. When teams use generative AI, they don’t just experiment with prompts or harmless examples. They upload real work. Real artifacts. Things like board decks (ai ai ai), financial models, and code, code, and more code.

As our customers ingest richer telemetry from security tools, this view will sharpen. With telemetry from tools like SASE, leaders can distinguish between uploads to sanctioned and unsanctioned AI applications, and Fable will be able to target cohorts of employees who upload content only to unsanctioned applications, where the risk is significant. Or they'll be able to refine even further and target only those whose uploads to an unsanctioned application trigger a DLP violation. So stay tuned on this topic.
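To make those two cohorts concrete, here is a minimal sketch of how the targeting logic might look. It is an illustration only, not Fable's implementation: the `UploadEvent` fields, the `SANCTIONED_APPS` set, the app names, and the sample events are all hypothetical stand-ins for whatever your SASE and DLP telemetry actually provides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UploadEvent:
    """One upload to a generative AI app (hypothetical schema)."""
    user: str
    app: str
    dlp_violation: bool


# Hypothetical allow-list; in practice this would come from policy.
SANCTIONED_APPS = {"approved-ai"}


def unsanctioned_only_users(events):
    """Users whose every upload went to an unsanctioned app."""
    apps_by_user = {}
    for event in events:
        apps_by_user.setdefault(event.user, set()).add(event.app)
    return {
        user
        for user, apps in apps_by_user.items()
        if apps.isdisjoint(SANCTIONED_APPS)
    }


def unsanctioned_dlp_users(events):
    """Users with at least one unsanctioned upload that tripped DLP."""
    return {
        event.user
        for event in events
        if event.app not in SANCTIONED_APPS and event.dlp_violation
    }


# Made-up sample events, purely for illustration.
sample_events = [
    UploadEvent("ana", "approved-ai", False),
    UploadEvent("ana", "shadow-ai", False),
    UploadEvent("ben", "shadow-ai", False),
    UploadEvent("cy", "shadow-ai", True),
]

broad_cohort = unsanctioned_only_users(sample_events)
narrow_cohort = unsanctioned_dlp_users(sample_events)
```

In this toy data, the broad cohort contains the two users who never touched the sanctioned app, and the narrow cohort shrinks to the one user whose unsanctioned upload also triggered DLP.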

So who’s uploading the most content today? In one customer environment, the technology team led every other group by a wide margin, with an average of 129 uploads per person over a six-month period. That may not be surprising, since engineers are often early adopters, but the second-place finisher raises eyebrows. Legal and compliance teams ranked next (with an average of 22 uploads), underscoring how quickly AI has permeated even the most risk-aware functions.

The content itself tells an equally important story. Code accounted for 60% of uploads, followed by documents at 26%. Media made up 5%, with the remaining 9% falling into a mixed “other” category. Each file type carries its own exposure, from intellectual property leakage to regulatory risk. Together, they paint a clear picture: generative AI is already embedded in critical workflows.
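If you want to run the same kind of breakdown on your own upload logs, the arithmetic is simple. The sketch below assumes a hypothetical log reduced to two inputs: a list of content-type labels (one per upload) and a mapping of user to upload count for a single team. The sample values are made up and are not the figures from this report.

```python
from collections import Counter


def content_shares(content_types):
    """Percentage of uploads per content type."""
    counts = Counter(content_types)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: 100 * n / total for kind, n in counts.items()}


def average_uploads_per_person(upload_counts_by_user):
    """Mean uploads per person for one team."""
    if not upload_counts_by_user:
        return 0.0
    return sum(upload_counts_by_user.values()) / len(upload_counts_by_user)


# Made-up sample data, purely for illustration.
sample_types = ["code"] * 6 + ["document"] * 3 + ["media"]
sample_team = {"user_a": 10, "user_b": 20}

shares = content_shares(sample_types)
team_average = average_uploads_per_person(sample_team)
```

Averages per person make teams of different sizes comparable, which is why a small legal team can land in second place behind a much larger engineering group.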

This is where security programs must evolve. The question is no longer whether employees are using generative AI, but rather how, where, and with what data. Organizations that can map human behavior to AI usage in real time won’t just reduce risk. They’ll gain the clarity needed to let people move fast while staying out of dangerous waters.

Check us out tomorrow for a look at toxic combinations.