Day 7 of 12 days of riskmas (or, if you prefer, risk-mukah or the non-denominational risk-ivus)

The TL;DR

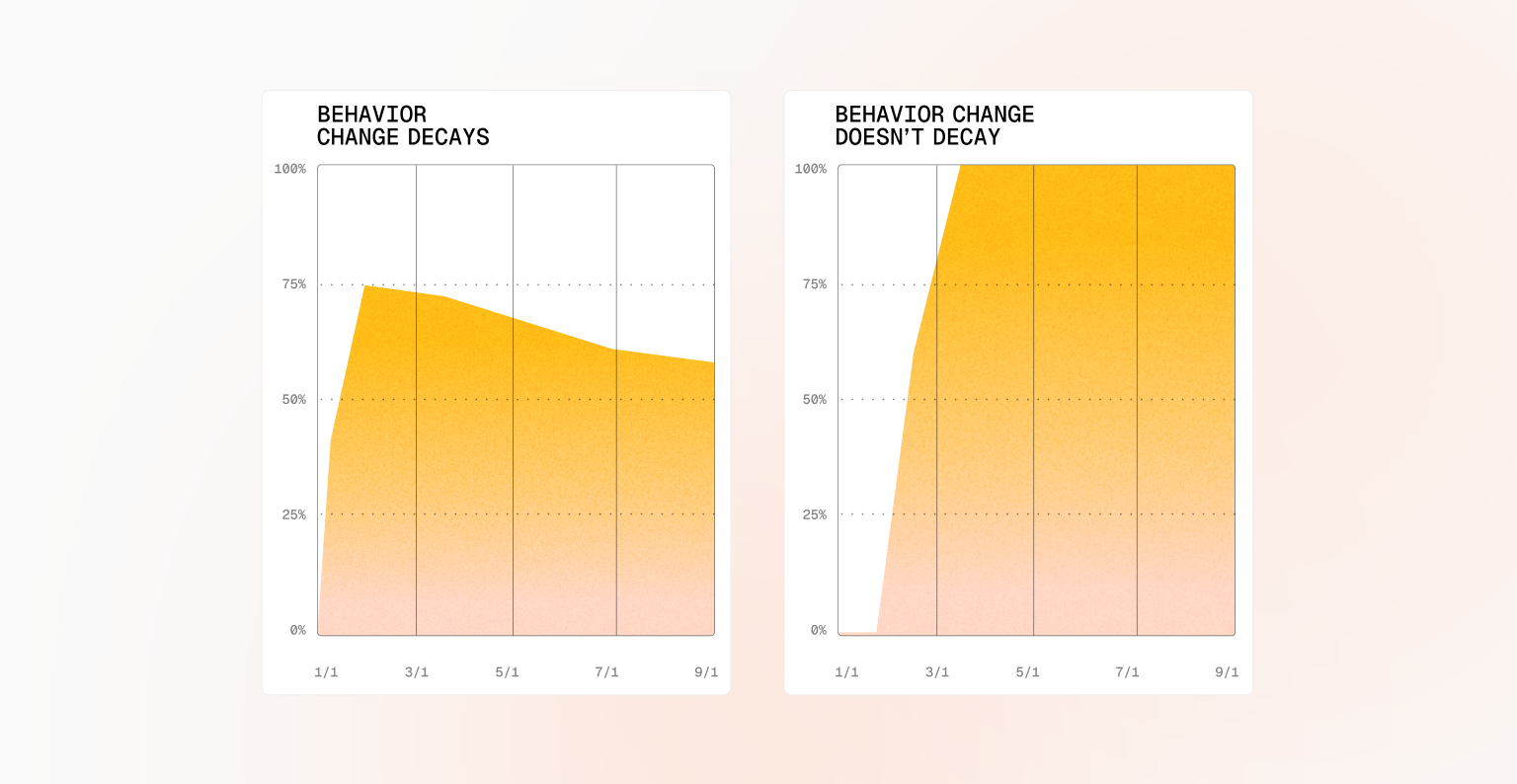

- Behavior change can fade after your security campaign

- The behavior decay interval measures how long improvements actually last

- Point-in-time metrics hide slow drift back to risky habits

- We think relevant, clear guidance drove lasting change in one example campaign

- Ongoing monitoring enables timely intervention before risk returns

Does security behavior change actually stick? That’s the question the behavior decay interval is designed to answer.

The behavior decay interval measures the staying power of a security campaign: how quickly people revert to old habits once the initial attention fades. Without this lens, you may mistake short-term improvement for lasting progress.

In some programs, behavior improves briefly and then tapers off as people get busy and distracted. This kind of decay is easy to miss if you only look at point-in-time metrics. What matters more is the slope: are behaviors holding steady, or slowly drifting back toward a risky threshold?
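To make the "slope" idea concrete, here is a minimal sketch that fits a straight line to a series of compliance rates and reports the change per period. The function name `trend_slope` and the monthly numbers are hypothetical, chosen only for illustration; they are not data from any campaign or a description of how Fable computes this.

```python
from statistics import mean


def trend_slope(rates):
    """Least-squares slope of compliance rate per period (e.g. per month).

    A negative slope suggests behavior is drifting back toward risky habits;
    a slope near zero suggests the change is holding steady.
    """
    n = len(rates)
    if n < 2:
        raise ValueError("need at least two observations")
    xs = range(n)
    x_bar = mean(xs)
    y_bar = mean(rates)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, rates))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


# Hypothetical monthly compliance rates (illustrative only).
decaying = [0.90, 0.85, 0.80, 0.75, 0.70]
steady = [0.90, 0.90, 0.90, 0.90, 0.90]

print(f"decaying slope: {trend_slope(decaying):+.3f} per month")
print(f"steady slope:   {trend_slope(steady):+.3f} per month")
```

A single snapshot of the decaying series at month one (90%) looks identical to the steady one; only the trend across months tells them apart.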

At Fable, we care intensely about all of the factors that go into how you change behavior, how quickly you can change behavior, and how long that change lasts.

The visual below shows two campaigns—one where behavior change began to decay, and another where the change held steady (for at least the last 11 or so months, fingers crossed!). In the right-hand example, developers were inadvertently logging PII to an observability tool. Clear, timely guidance explained what went wrong and exactly how to fix it. The result was 60% compliance in the first two months—and full compliance thereafter. Our strong hunch is that the difference wasn’t volume or repetition, but relevance and clarity. That said, we’re looking across all campaigns and will no doubt have more to say on this topic.

It’s not always obvious why one campaign sticks and another fades. That’s why monitoring behavior over time matters. By tracking decay intervals, teams can spot when performance drops below an acceptable threshold and intervene before risky habits fully resurface.
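One way to sketch this kind of tracking: treat the decay interval as the number of periods between a campaign's peak and the first time the rate falls below an acceptable threshold. That definition, the `decay_interval` name, the 80% threshold, and the sample series are all assumptions made for illustration, not a specification of the metric.

```python
def decay_interval(rates, threshold):
    """Periods from the peak until the rate first drops below threshold.

    Returns None if the rate never drops below threshold after its peak,
    i.e. no decay has been observed yet in this window.
    """
    if not rates:
        raise ValueError("rates must not be empty")
    # Index of the first occurrence of the highest rate.
    peak_index = max(range(len(rates)), key=lambda i: rates[i])
    for i in range(peak_index + 1, len(rates)):
        if rates[i] < threshold:
            return i - peak_index
    return None


# Hypothetical monthly compliance rates (illustrative only).
fading = [0.60, 0.95, 0.90, 0.82, 0.78, 0.70]
holding = [0.60, 0.60, 1.00, 1.00, 1.00]

print(decay_interval(fading, threshold=0.80))
print(decay_interval(holding, threshold=0.80))
```

A result of `None` only means decay has not shown up so far, which is why the check is worth rerunning as each new period of data arrives.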

Behavior change isn’t a one-time event; it’s a system. Measure how long improvements last, watch for decay, and be ready to step in when needed. That’s how security programs move from short-term wins to durable impact.

Check us out tomorrow for a look at AI swashbucklers (it’s really just about uploading content to generative AI, but we wanted to use the word “swashbucklers”).