The TL;DR

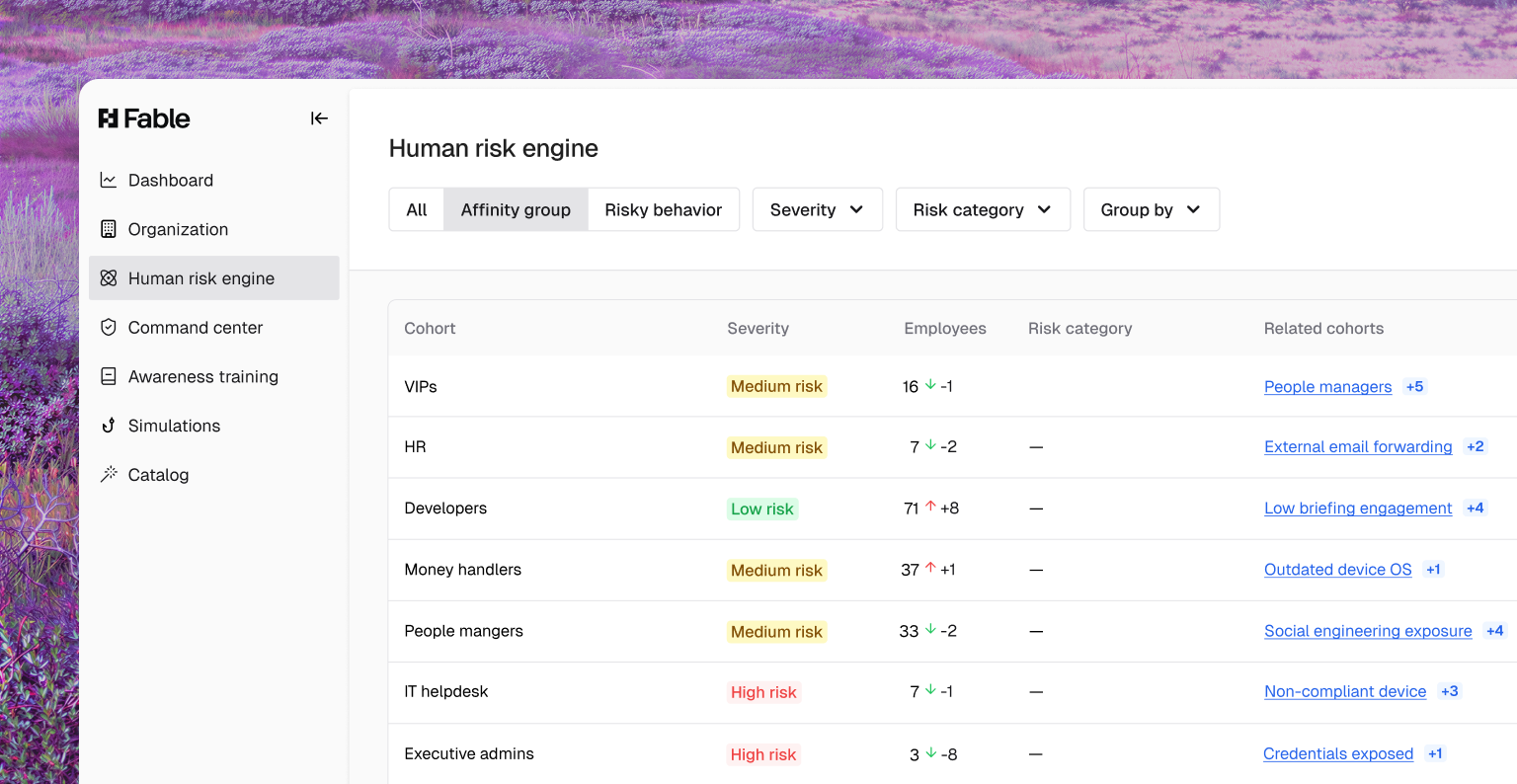

- Most “risk-based targeting” is really just role-based targeting with assumed risk.

- True risk-based targeting responds to observed behavior.

- Security teams have a finite attention budget, and wasted training erodes impact.

- Targeting the few who actually cause risk drives better outcomes and trust.

A hot topic in human risk management is risk-based targeting. Everyone knows one-size-fits-all security training is a relic of yesteryear, and there’s even a fair body of evidence that it can have the opposite of its intended effect. Lots of vendors claim to target risk, but few actually do it. What they really mean is role-based targeting.

To be clear, role-based training is a good thing. It shows employees what “good” looks like at their company and, delivered in a relevant and specific way, serves as an excellent starting point for training. Lots of our customers brief, say, the finance team on an emerging social engineering threat targeting them. Others deploy a particular type of phishing simulation only to developers, built around the tools they already use. But if your human risk management vendor tells you this is “risk-based targeting,” I’d say what they’re really talking about is role-based targeting with assumed risk layered on top, not targeting based on actual observed risk. The distinction may sound academic, but in practice it has real consequences for effectiveness, trust, and attention.

Here’s an example of assumed risk: engineers receive training on securing API keys or following cloud storage best practices. These are reasonable guesses, and they’re not wrong. Any solid security content library should absolutely include this material. The problem is that the targeting itself is static. It’s driven by who someone is in the org chart, not by what they’ve actually done. Risk is inferred, not observed. And the bigger question is this: how many developers do you know who will sit through training on every topic they might encounter before fatigue sets in?

Security awareness leaders know the truth: they have a finite amount of attention to work with. Every unnecessary alert, notification, or (yes) training module spends a little of that budget. So anything they send had better pack a punch.

At one customer, a financial technology company, the security team detected a specific data-handling behavior: their Splunk instance was showing PII violations. They traced the violations back to Datadog, and from there to a faulty parser that about 150 of the company’s nearly 1,000 engineers were using. Instead of broadcasting a generic warning to the entire engineering organization, they targeted those 150 engineers with a 90-second Fable video briefing. It was crisp, to the point, and highly specific: it named the tools, named the violation, and gave a precise call to action. The result: they cut those violations by 60% within a month and to zero in the months that followed, with zero recidivism. The other benefit? Everyone who wasn’t logging PII never received the briefing. The company interrupted fewer people, preserving everyone else’s attention for issues genuinely relevant to them.
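To make the contrast between assumed and observed risk concrete, here is a minimal Python sketch of the two selection strategies. It is not how Fable or any particular platform works: `Employee`, `ViolationEvent`, the `pii_in_logs` label, and the 30-day window are all hypothetical, and the sketch assumes violations have already been attributed to individual people upstream (for example, by tracing log events back to whoever owns the misbehaving configuration). The toy data in the demo only mirrors the proportions of the example above; it is not real data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Employee:
    user_id: str
    role: str  # hypothetical org-chart role, e.g. "engineering"


@dataclass(frozen=True)
class ViolationEvent:
    user_id: str  # assumes attribution to a person was done upstream
    kind: str  # hypothetical violation label, e.g. "pii_in_logs"
    observed_at: datetime


def role_based_targets(roster, role):
    """Assumed risk: everyone who holds the role gets the message."""
    return {e.user_id for e in roster if e.role == role}


def observed_risk_targets(roster, events, kind, now, window=timedelta(days=30)):
    """Observed risk: only current employees with a matching, recent violation."""
    active = {e.user_id for e in roster}
    cutoff = now - window
    return {
        ev.user_id
        for ev in events
        if ev.kind == kind and ev.observed_at >= cutoff and ev.user_id in active
    }


if __name__ == "__main__":
    # Toy data only: 1,000 engineers, 150 of whom recently logged PII.
    now = datetime(2024, 1, 31)
    roster = [Employee(f"eng{i}", "engineering") for i in range(1000)]
    events = [
        ViolationEvent(f"eng{i}", "pii_in_logs", now - timedelta(days=3))
        for i in range(150)
    ]
    # A stale event outside the 30-day window is ignored.
    events.append(ViolationEvent("eng999", "pii_in_logs", now - timedelta(days=90)))

    print(len(role_based_targets(roster, "engineering")))  # 1000
    print(len(observed_risk_targets(roster, events, "pii_in_logs", now)))  # 150
```

The design choice worth noting is the recency window: targeting on stale events would quietly drift back toward assumed risk, so the sketch only acts on behavior observed recently.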

Also note the qualitative difference in how these messages land. Sending content about a risk someone might encounter (“Make sure you protect personal data”) feels generic and easy to tune out, especially if it doesn’t map to anything concrete in the recipient’s day-to-day work. By contrast, content that reflects an observed behavior (“You inadvertently logged sensitive data in cleartext to Datadog”) is specific, credible, and hard to ignore. It moves security guidance from the abstract into the real world, where learning actually sticks.

Role-based training is valuable, and there’s a place for it in your human risk management content lineup. It gives employees that “wanted poster,” reminding them which behaviors to steer clear of. But true risk-based targeting starts with behavior, not assumptions. When we anchor targeting in what’s actually happening in the environment, we respect people’s time, deliver highly specific guidance, increase the impact of our interventions, and build trust that security messages are sent for a reason. In a world where attention is scarce, that makes all the difference.