TL;DR—

Part two of a six-part blog series on must-ask questions when creating net-new awareness training.

- Always start with “Why this training, now?”

- Possible answers include:

  - Trending headline response

  - New threat intelligence or security research

  - Risky behavior patterns

  - Recent security incidents or near-misses

- See “Five must-ask questions for security training that changes employee behavior” for more questions Fable Security asks our clients before creating short-yet-impactful briefings!

Stopping to ask “why?” can speed your time to security training.

While this question may sound obvious, “Why this, now?” is the question we most often ask our clients after receiving a custom security training request.

Depending on the answer, clients sometimes don’t need a training course at all.

Instead, a reassuring “we’re covered!” nudge to employees panicking over the latest trending threat might work just as well as a custom briefing.

Other times, there’s no action that a specific employee cohort could take to mitigate the relevant threat.

(For example, how often are your customer service staff patching NetScaler gateways, despite the breathless headlines about CitrixBleed 2 exploitation?)

We can also sometimes adapt pre-existing security awareness briefings to cover specific attacks that reuse common tricks with a new coat of paint.

The “EvilAI” campaign, for instance, reuses the same infrastructure and general attack pattern as other malvertising and SEO poisoning campaigns; its lures are just reskinned for anyone looking for a Gen AI-related productivity app.

How your “why” changes your security training approach.

So, what’s your reason for wanting a new security awareness briefing or training module? Did you:

- See a headline somewhere?

  - If so, did you want to create a briefing to proactively warn your users before attackers try it with your employees?

  - Or, did you want to send out a notice to reassure your executives and employees that your organization is currently protected against the threat?

- Read a threat intel report that made your stomach drop?

  - If so, consider what specifically your general employees could do to spot a new phishing lure or threat, versus what only your IT administrators or security personnel need to know.

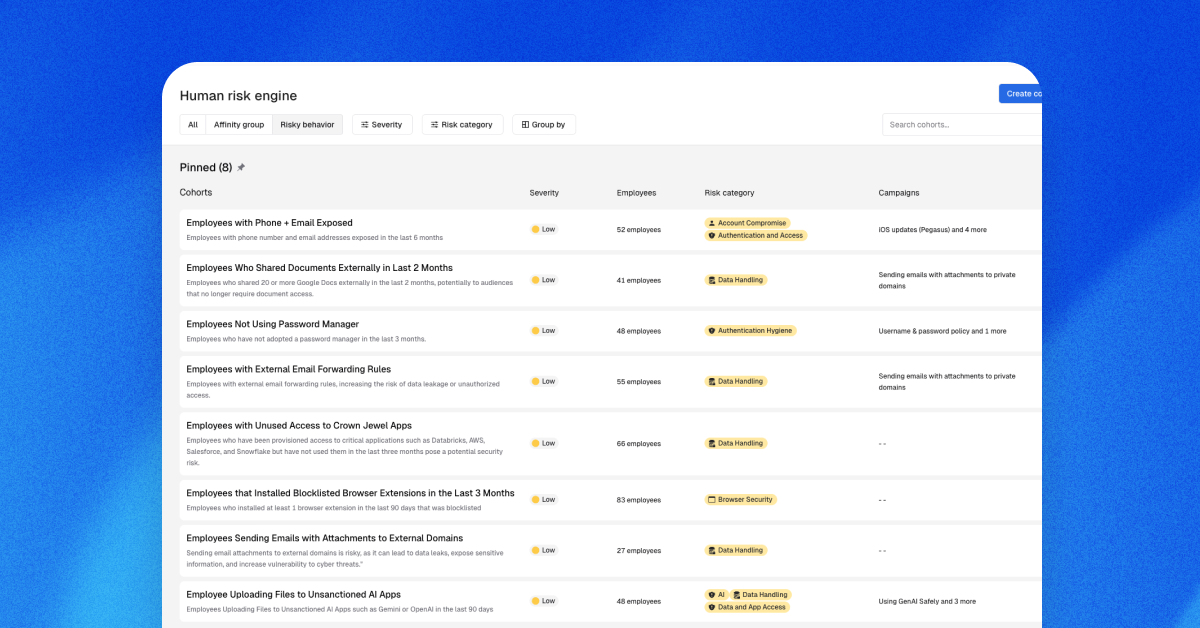

- Notice a risky behavior pattern?

  - If so, is that pattern currently trending upwards?

  - Or, are you being proactive to keep the behavior from getting worse?

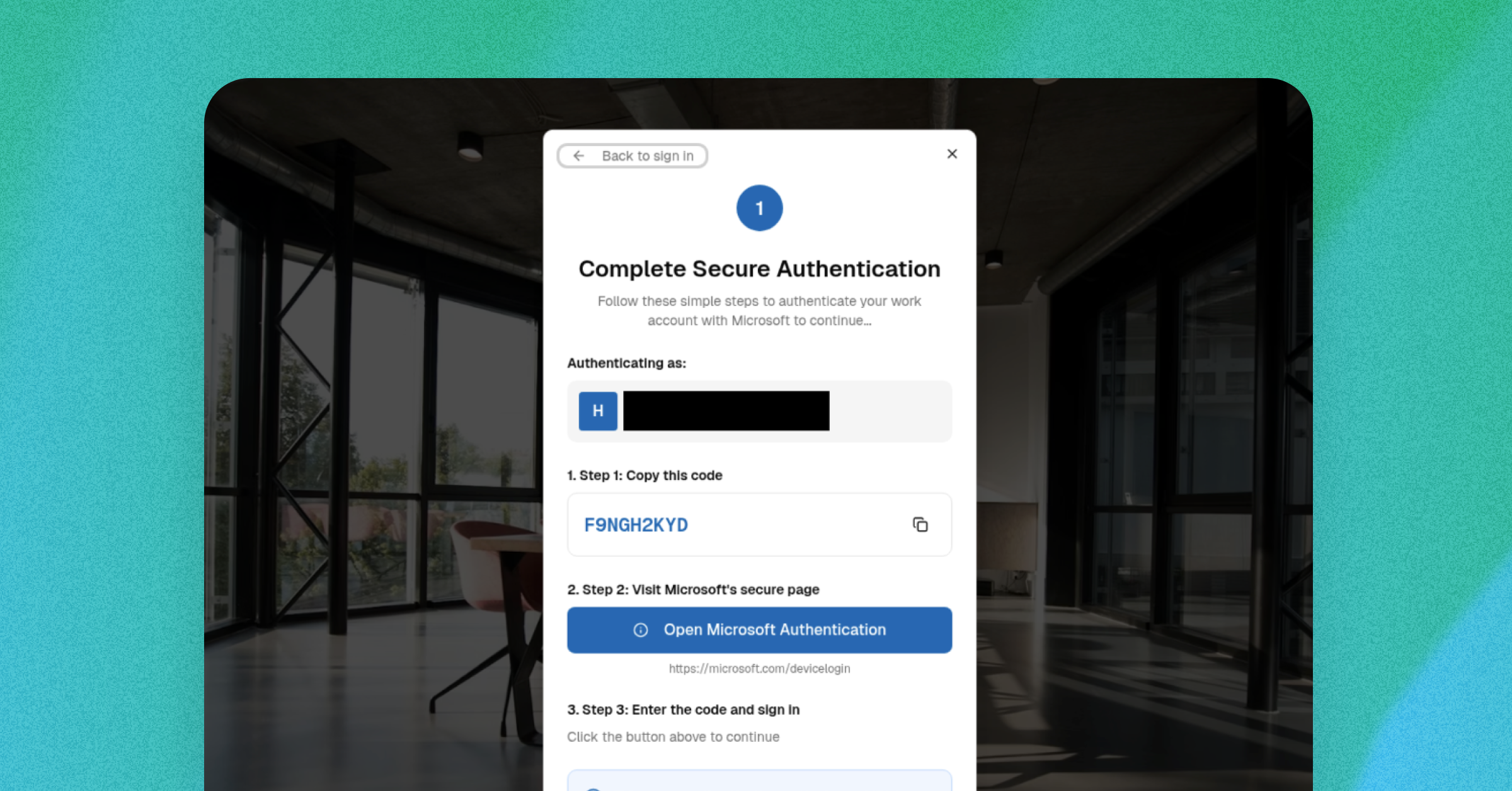

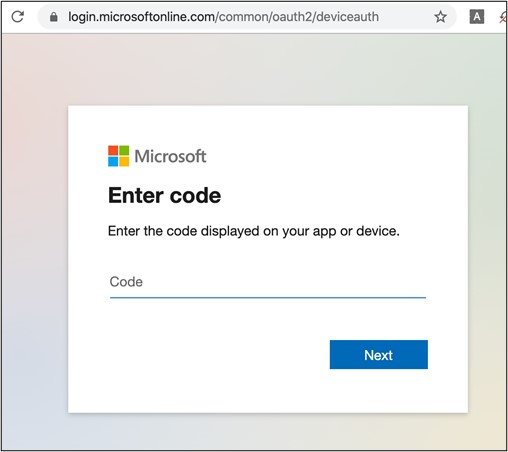

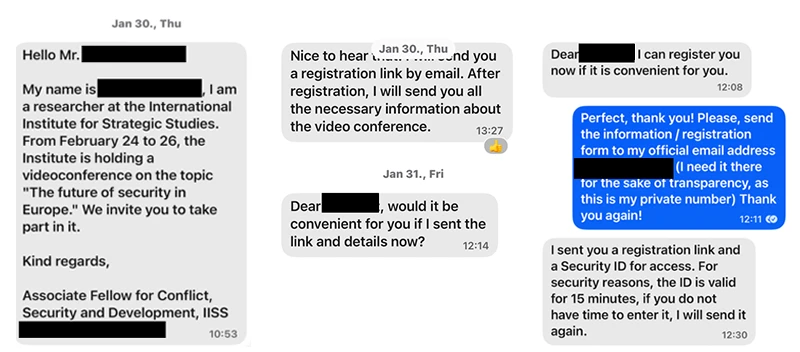

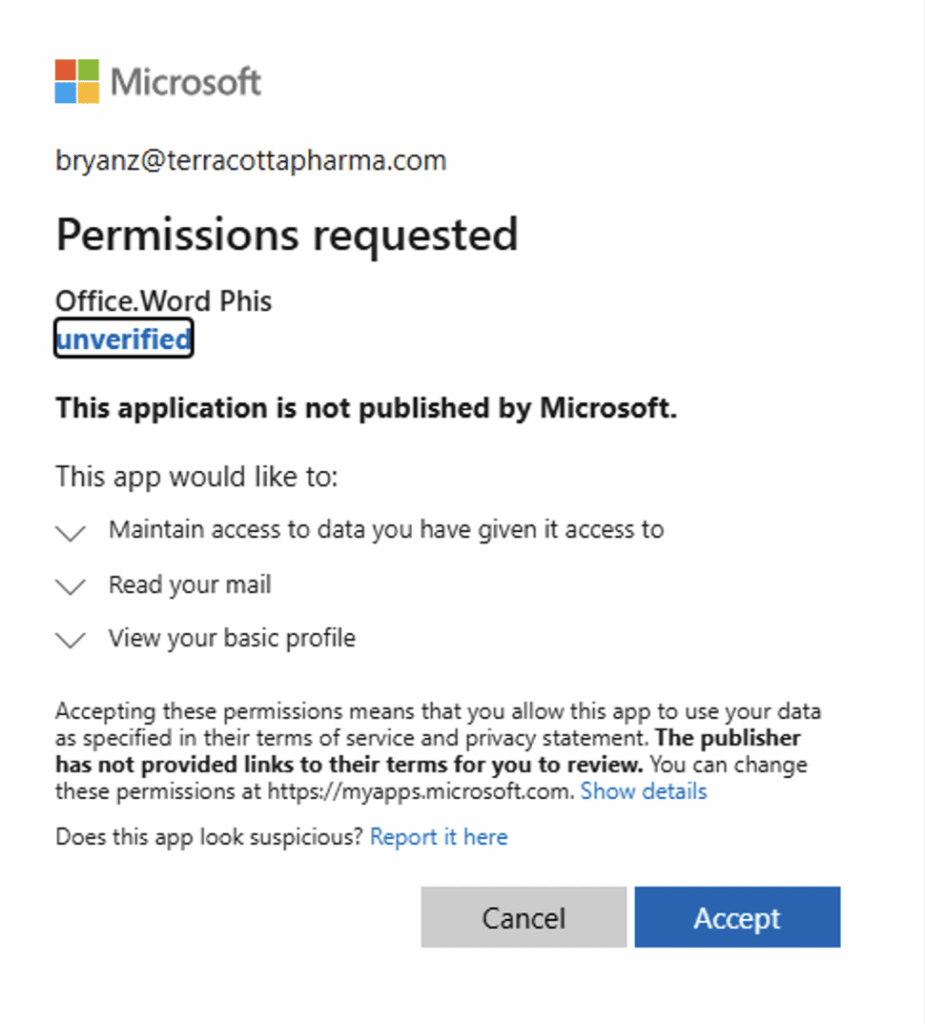

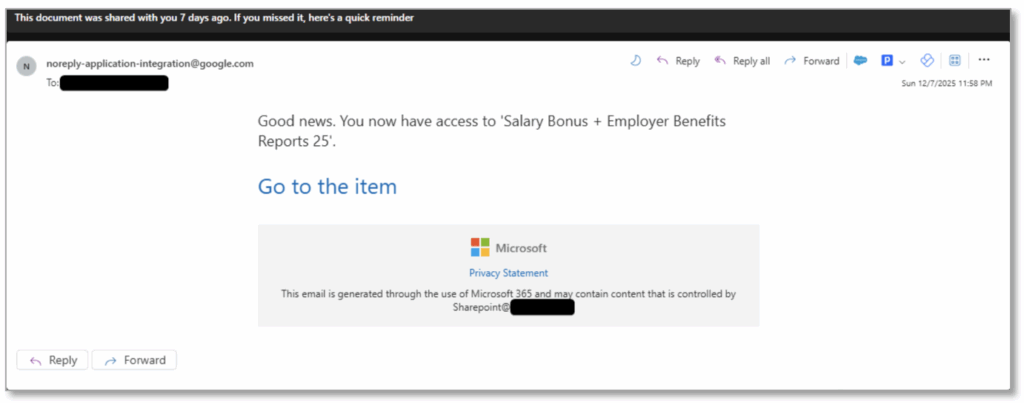

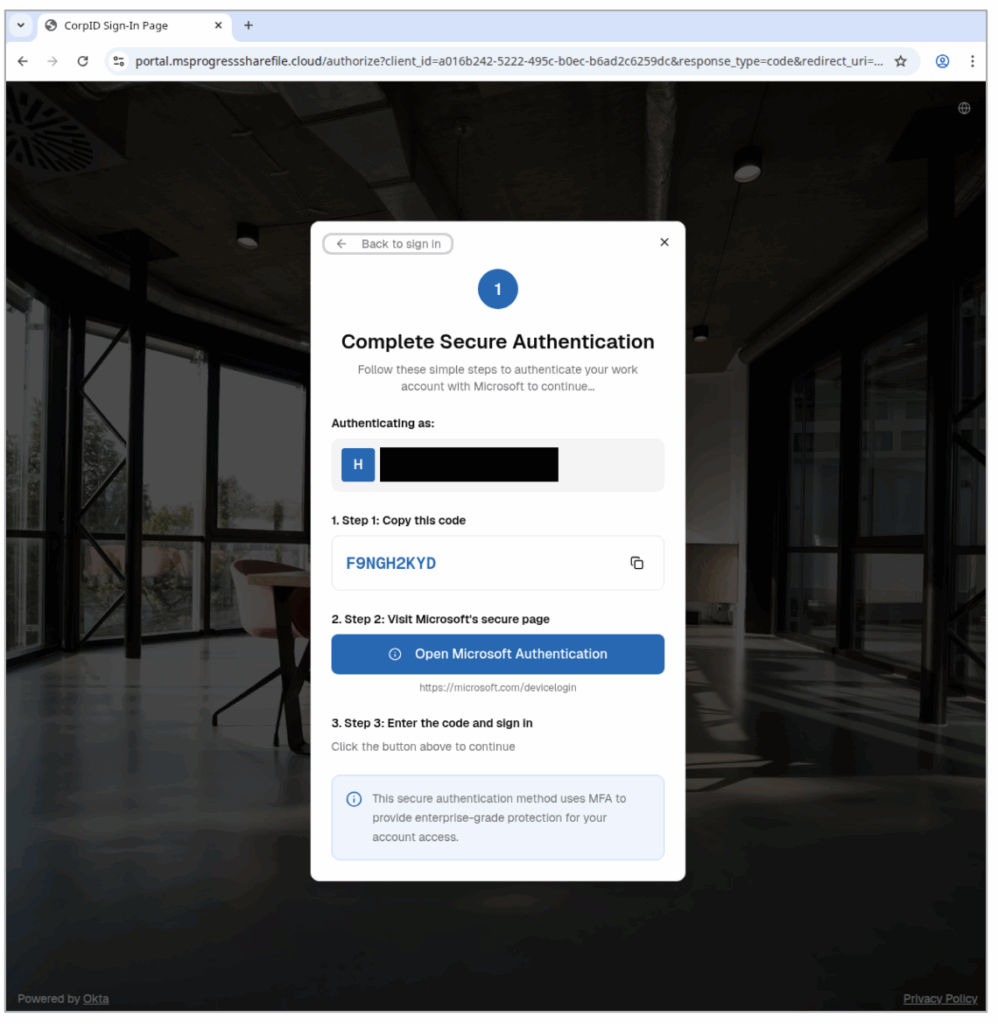

- Recently deal with an internal security incident or near-miss?

  - If so, how many details could you include to reassure the recipients that it’s handled, while keeping the briefing realistic enough to help prevent future incidents?

  - Extra credit if you can include screenshots of the phishing lure, malicious pop-up, or any other artifact someone might see on the front end of an attack!

If this training request was prompted by an external blog or report, we’ll usually ask to see it. Not because we want to copy it, of course, but because it helps anchor the training in reality.

After all, people are much more likely to change their behavior when faced with concrete evidence of actual impacts, rather than hypothetical “this could happen” bad vibes.

We’re diving deep into all five questions we ask our clients and why, so sign up to get each blog as it comes out.

(And, if you want more ideas on fostering a positive, employee-friendly security culture, check out page 17 of “Modern Human Risk Management for Dummies”.)